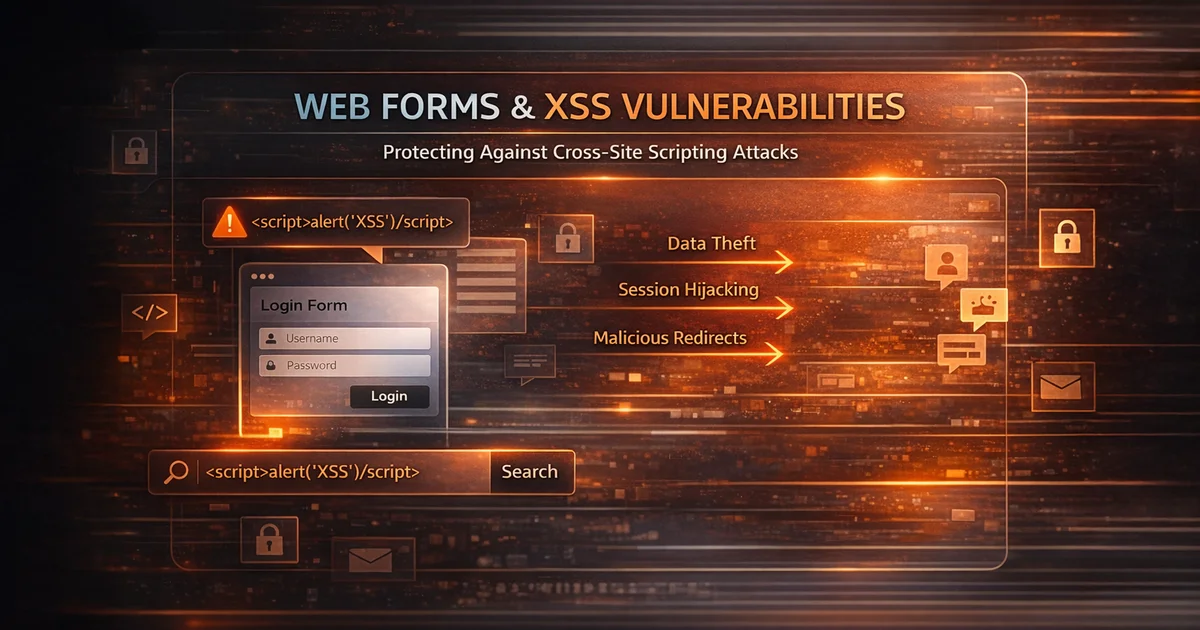

Web forms are one of the most common ways users interact with websites. Contact forms, login fields, comment sections, search bars, support portals, checkout pages, registration flows, and profile settings all depend on user input. That makes forms essential for modern web applications, but it also makes them one of the most common places where security weaknesses appear. When user-controlled data is not handled safely, attackers may be able to inject malicious scripts and exploit cross-site scripting vulnerabilities.

XSS remains one of the most important web application security issues because it can affect both simple websites and complex platforms. A single vulnerable field can allow attackers to execute malicious JavaScript in a victim’s browser, manipulate page content, steal session tokens, capture credentials, or redirect users to malicious destinations. Many organizations assume they are protected because they apply a few input filters or rely on framework defaults, but weak filtering alone is not enough.

Input sanitization is an important part of reducing this risk, especially when applications accept user-supplied text that may later be displayed to other users or administrators. However, sanitization is only one layer. Effective XSS prevention also requires strict input validation, context-aware output encoding, secure frontend rendering practices, supporting browser-side protections, and regular testing.

For teams that want to combine secure coding with repeatable verification, vulnerability assessment platforms can help identify exposed risk before attackers do. A website vulnerability scanner helps security teams review public-facing application behavior, investigate possible XSS exposure, and validate whether form-related improvements reduce risk over time.

What Is Cross-Site Scripting (XSS)?

Cross-site scripting, usually shortened to XSS, is a vulnerability that allows attackers to inject malicious client-side code into web pages viewed by other users. In most cases, the payload is JavaScript, although other browser-executed content can also play a role. When the vulnerable page loads, the browser runs the attacker’s code as though it were trusted application content.

This is dangerous because browsers treat any script that runs in a page as part of that page’s origin, regardless of how the script got there. If a malicious script executes inside that trusted context, it may access cookies and session identifiers that are readable by script, local storage, page content, form fields, or user actions. Depending on the application and browser protections in place, attackers may use XSS to steal sessions, impersonate users, modify on-page content, perform actions on behalf of the victim, or deliver additional payloads.

XSS is often described as an “input problem,” but in reality it is usually a data-handling problem across multiple stages. The application accepts user-controlled input, processes or stores it, and later renders it into a page, attribute, script block, or DOM operation without the correct protection. That is why safe form handling is such a critical part of preventing XSS.

Why Web Forms Are Common XSS Entry Points

Web forms are natural XSS targets because they are specifically designed to receive data from users. Every text field, textarea, search box, name field, profile setting, message body, or support request creates a possible path through which attacker-controlled content can enter the application. If that content is later rendered without proper handling, the browser may interpret it as executable code instead of plain text.

Forms become especially risky when submitted data is reused in multiple places. A search term may be reflected into the results page. A comment may be stored and shown to other visitors. A support message may be displayed in an administrative dashboard. A profile field may appear in public listings, account pages, exports, or email templates. Once user-controlled content moves from submission to rendering, the XSS risk depends entirely on how safely the application handles it.

Another reason forms are frequently vulnerable is that teams often focus on usability and functionality before security. Developers may validate required fields, length, and formatting, but fail to think about how that same data will be rendered later. A field that correctly accepts text can still become dangerous if the application later inserts that text into HTML, attributes, JavaScript, or the DOM without the correct output protection.

Types of XSS That Affect Forms

Reflected XSS

Reflected XSS occurs when attacker-controlled input is returned immediately in the server response and rendered back into the page. The payload is not stored permanently by the application. Instead, it is reflected as part of the response to a crafted request, often through query parameters, search inputs, form submissions, or error messages.

This type of XSS is common in search results pages, login flows, password reset pages, and form validation messages where the application displays submitted values back to the user. If those values are rendered without correct encoding, a malicious script may execute in the browser when the victim loads the page.

Example: Search Form Reflection

A website may show a message such as:

You searched for: test query

If the application inserts the search parameter directly into the page without proper encoding, an attacker might craft a malicious request like:

https://example.com/search?q=<script>alert(1)</script>

If the server reflects that value unsafely, the browser may execute the script instead of displaying it as text. In practical security testing, scanners can help identify parameters where user-controlled input is reflected into the response. That gives security teams a starting point to investigate whether the value is being rendered safely or whether the behavior suggests reflected XSS exposure.
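As a minimal sketch of the safe version of this page, the snippet below HTML-encodes the query before placing it in the response. It uses only the Python standard library. The function name `render_search_heading` is hypothetical, and a real application would normally rely on its template engine's auto-escaping rather than hand-built strings.

```python
from html import escape


def render_search_heading(query: str) -> str:
    """Return the 'You searched for' line with the query HTML-encoded."""
    # escape() turns &, <, > and (by default) both quote characters into
    # entities, so the browser displays the query instead of parsing it.
    return f"<p>You searched for: {escape(query)}</p>"


print(render_search_heading("<script>alert(1)</script>"))
# <p>You searched for: &lt;script&gt;alert(1)&lt;/script&gt;</p>
```

The payload from the crafted URL now appears on the page as visible text, which is the behavior a tester would expect to see after the fix.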

Stored XSS

Stored XSS occurs when malicious input is accepted by the application, saved, and later displayed to users. This is often more dangerous than reflected XSS because the victim does not need to visit a specially crafted link. Once the payload is stored in the application, every user who loads the affected content may be exposed.

Stored XSS commonly appears in comment sections, support portals, forums, product reviews, user biographies, messaging systems, ticket platforms, and any workflow where user-submitted content is stored and later rendered elsewhere. If output encoding is missing at the point of display, stored content can become an ongoing attack vector.

Example: Contact Form Payload in an Admin Dashboard

Consider a contact form that allows visitors to submit support messages. Those messages may later appear inside an internal administrative dashboard used by staff to review requests. If a malicious user submits a script payload and the dashboard displays that message without proper encoding, the code may execute inside the administrator’s browser.

For example, an attacker might submit a payload intended to capture accessible session information or manipulate the page. Even if the HttpOnly flag prevents the script from reading the session cookie, stored XSS inside an admin context may still be severe because the script can act with the administrator’s privileges and reach sensitive features and workflows. This is why teams must treat all user input as untrusted, including content displayed only to internal staff.

Security scanning does not replace code review here, but it can help surface suspicious rendering behavior and identify exposed application flows that deserve closer investigation. If user-submitted content is reflected or reused in risky ways, that signal is worth reviewing immediately.

DOM-Based XSS

DOM-based XSS occurs in the browser when client-side JavaScript reads attacker-controlled input and writes it into the page using unsafe methods. In these cases, the vulnerability may exist entirely in frontend logic rather than in the server-rendered response. This makes DOM-based XSS particularly relevant in modern single-page applications and dynamic frontend frameworks.

For example, a script may read a value from the URL or a form field and insert it into the page using an unsafe DOM API such as innerHTML. If that value contains malicious markup, such as an element with an inline event handler, the browser may execute it. Because this behavior is introduced by client-side code after the page loads, it can be harder to spot during simple page-source inspection.

DOM-based XSS often appears when developers bypass safer rendering patterns for convenience. Even teams using modern frameworks can introduce XSS if they use raw HTML insertion, unsafe templating shortcuts, or direct DOM manipulation with untrusted data.

Input Validation vs Input Sanitization vs Output Encoding

These three concepts are closely related, but they are not interchangeable. Confusing them is one of the most common reasons teams believe they have prevented XSS when they have only reduced one part of the risk. Understanding the difference is essential for building secure web forms.

Input Validation

Input validation checks whether submitted data matches the expected format, type, structure, or length. A phone number field should contain allowed phone-number characters. An age field should contain numeric input within a valid range. An email field should match an email format. Validation helps reject clearly invalid data before it moves deeper into the application.

Validation is important, but it does not stop XSS on its own. A malicious payload may still pass validation if the field is allowed to contain general text, punctuation, or rich formatting. Validation reduces attack surface and improves data quality, but it should not be treated as the only XSS defense.
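The difference between a strictly typed field and a free-text field can be sketched as follows. The function names and the limits (an age of 0 to 130, a 2000-character comment) are assumptions chosen for illustration, not rules from any particular application.

```python
def validate_age(raw: str) -> int:
    """Accept only a whole number from 0 to 130 (range is an assumption)."""
    # Restrict to short ASCII digit strings before converting.
    if not (raw.isascii() and raw.isdecimal() and len(raw) <= 3):
        raise ValueError("age must be a whole number")
    age = int(raw)
    if not 0 <= age <= 130:
        raise ValueError("age out of range")
    return age


def validate_comment(raw: str) -> str:
    """Free-text field: only emptiness and length can be checked."""
    text = raw.strip()
    if not text or len(text) > 2000:
        raise ValueError("comment must be 1 to 2000 characters")
    return text
```

With these rules, `validate_age("<script>")` raises `ValueError`, but `validate_comment("<script>alert(1)</script>")` returns the payload unchanged because it is legitimate free text. The comment field therefore still depends on correct handling when it is rendered.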

Input Sanitization

Input sanitization modifies or cleans submitted content to remove, neutralize, or restrict dangerous constructs. This may include stripping disallowed HTML tags, removing event handlers, normalizing suspicious markup, or transforming unsafe input before it is stored or processed. Sanitization is especially relevant when applications need to allow some user formatting while preventing dangerous behavior.

However, sanitization has limitations. Weak sanitizers are often bypassed, and custom filters based on simple pattern matching are especially fragile. Sanitization should therefore be viewed as a supporting control rather than the whole solution. If sanitized content is later rendered in a sensitive context, the application still needs the correct output protection at display time.

Output Encoding

Output encoding is one of the most important XSS defenses because it protects user-controlled data at the point where it is rendered. Instead of allowing special characters to be interpreted as HTML or script syntax, the application converts them into a safe representation. This causes the browser to display the content as text rather than execute it.

Output encoding must always match the rendering context. HTML body content, HTML attributes, JavaScript strings, CSS values, and URLs all require different handling. This is why teams that rely only on sanitization often remain vulnerable. Correct context-aware output encoding is what breaks the path between attacker input and browser execution.
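To make the idea of context concrete, here is a hedged sketch of four small encoders built on the Python standard library. The function names are hypothetical. In practice a template engine or a dedicated encoding library usually does this work, and CSS contexts are left out because the standard library has no encoder for them.

```python
import json
from html import escape
from urllib.parse import quote


def for_html_body(value: str) -> str:
    # Text between tags: encode &, < and >.
    return escape(value, quote=False)


def for_html_attribute(value: str) -> str:
    # Attribute values also need quotes encoded; the template must still
    # wrap the attribute value in quotes.
    return escape(value, quote=True)


def for_js_string(value: str) -> str:
    # json.dumps produces a quoted string literal; the extra replacements
    # keep "</script>" or "<!--" from terminating an inline script block.
    literal = json.dumps(value)
    return (literal.replace("<", "\\u003c")
                   .replace(">", "\\u003e")
                   .replace("&", "\\u0026"))


def for_url_component(value: str) -> str:
    # Percent-encode everything except unreserved characters.
    return quote(value, safe="")
```

The same input produces different output in each context: `for_html_attribute('" onmouseover="alert(1)')` yields `&quot; onmouseover=&quot;alert(1)`, while `for_url_component('a b&c')` yields `a%20b%26c`. Note that encoding a URL component does not make a fully user-controlled URL safe, since a `javascript:` scheme would still need to be rejected separately.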

Secure Web Form Design Principles

Treat All Input as Untrusted

No field should be assumed safe just because it looks harmless. Names, email addresses, search terms, usernames, support messages, and hidden fields can all contain attacker-controlled content. Even authenticated users may submit malicious input, and attackers can modify requests before they reach the server.

Once a team consistently treats all input as untrusted, security decisions become more disciplined across the application lifecycle. That mindset should extend from submission to storage to rendering, including internal dashboards, exports, notifications, and administrative tools.

Use Allowlist Validation Where Possible

When a field has a clear purpose, allowlist validation is usually safer than trying to block dangerous patterns. A postal code field should allow the specific character patterns expected for postal codes. A quantity field should allow numeric values within a range. A username field may allow letters, numbers, underscores, and limited punctuation.

Blocklists are weaker because they depend on predicting dangerous input patterns, and attackers routinely find ways around them. Allowlist validation reduces ambiguity and makes defensive logic easier to maintain.
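A minimal allowlist sketch using regular expressions is shown below. The two patterns (a 3 to 20 character username and a US-style ZIP code) are assumptions for illustration; real rules depend on the application and locale.

```python
import re

# Hypothetical field rules; adjust to the formats the application expects.
USERNAME_RE = re.compile(r"[A-Za-z0-9_]{3,20}")
US_ZIP_RE = re.compile(r"[0-9]{5}(-[0-9]{4})?")


def is_valid_username(value: str) -> bool:
    # fullmatch requires the whole string to fit the pattern, so trailing
    # markup cannot ride along after an otherwise valid prefix.
    return USERNAME_RE.fullmatch(value) is not None


def is_valid_zip(value: str) -> bool:
    return US_ZIP_RE.fullmatch(value) is not None
```

Because the patterns describe what is allowed rather than what is forbidden, a value such as `<script>` or `12345<` fails without the code needing to know anything about attack syntax.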

Avoid Rendering Raw User HTML Unless It Is Truly Required

If an application does not genuinely need to support user-supplied HTML, it should not allow it. Plain text is easier to secure, easier to reason about, and less likely to create dangerous edge cases. Many XSS issues begin because applications accept richer formatting than the business use case actually requires.

If rich text is necessary, use a trusted HTML sanitization library designed specifically for security. Avoid custom regular-expression filters for cleaning HTML because they are unreliable and easy to bypass.

Encode on Output, Not Just on Input

Even if data has been validated and sanitized, it should still be encoded correctly when rendered. This is especially important because the same value may appear in multiple places and in multiple contexts. A value that appears harmless in a paragraph may become dangerous if later inserted into an HTML attribute, JavaScript block, or DOM fragment.

Encoding on output is what ensures the browser interprets the value as data rather than executable code. This is one of the strongest ways to stop a submitted payload from becoming an XSS exploit.

Prefer Safe Framework and Rendering Patterns

Modern frameworks often help reduce XSS risk by escaping output automatically, but only if developers stay within the safer rendering model. Problems appear when teams bypass these protections with raw HTML rendering, unsafe DOM manipulation, or convenience shortcuts that disable escaping.

Framework choice helps, but security still depends on disciplined implementation. Unsafe rendering patterns can reintroduce XSS even inside otherwise secure application stacks.

Common Developer Mistakes That Still Lead to XSS

Relying Only on Client-Side Validation

Client-side validation improves user experience, but it cannot be trusted for security. Attackers can bypass JavaScript checks, intercept requests, or submit crafted payloads directly to the backend. Any important validation or sanitization rule must also be enforced server-side.

Using Weak Blocklist Filters

Some applications try to prevent XSS by removing a few special characters or blocking obvious strings such as the word “script”. This is not enough. Browsers are flexible, and attackers can encode, split, or restructure payloads in many ways. Weak blocklists may block only the simplest examples while leaving real attack paths open.
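The fragility of this approach is easy to demonstrate. The filter below is a deliberately weak, hypothetical example of the pattern described above, not code from any real product.

```python
def naive_filter(value: str) -> str:
    """A deliberately weak filter: delete literal script tags in one pass."""
    return value.replace("<script>", "").replace("</script>", "")


# Nesting the blocked string inside itself rebuilds it after removal.
nested = "<scr<script>ipt>alert(1)</scr</script>ipt>"
print(naive_filter(nested))
# <script>alert(1)</script>

# Payloads that never use a script tag pass through untouched.
print(naive_filter("<img src=x onerror=alert(1)>"))
# <img src=x onerror=alert(1)>
```

Both outputs would still be dangerous if written into a page without output encoding, which is why the filter gives little real protection.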

Using Unsafe DOM APIs

Client-side code often becomes vulnerable when developers use methods such as innerHTML, outerHTML, or unsafe template insertion with untrusted data. These APIs can transform a plain string into active markup. Safer APIs such as textContent should be preferred when the goal is to display text rather than parse HTML.

Trusting Internal or Admin-Only Interfaces

Teams sometimes believe that internal dashboards do not need the same protections as public pages. This assumption is dangerous. Stored XSS is often especially harmful in administrative interfaces because privileged users may have access to sensitive functions, elevated permissions, or broader visibility into the system.

Forgetting Secondary Render Paths

A field may appear safe on the original page but become dangerous elsewhere. Support messages may appear in admin panels. Profile fields may appear in exports. Search terms may appear in logs or reports. User-submitted content may also end up inside email templates, previews, or client-side widgets. Security review must follow data through all the places where it is rendered, not just the initial form submission.

Additional Controls That Strengthen XSS Defense

Content Security Policy

Content Security Policy, or CSP, helps reduce the impact of XSS by restricting which scripts the browser is allowed to execute. A well-designed CSP can block inline scripts, limit script loading to trusted sources, and make it much harder for injected code to run successfully. It is not a replacement for secure coding, but it is an important supporting layer.

Example: Weak CSP Allowing Inline Script Execution

If an application allows inline scripts through a weak policy, for example by including 'unsafe-inline' in its script-src directive, an attacker may still be able to execute malicious code even when some input filtering exists. In other words, an already risky rendering path becomes even easier to exploit when browser-side protections are weak or missing.
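The contrast between a weak and a stricter policy might look like the following sketch. The helper `build_csp` and the exact directive set are assumptions; a real policy has to be tuned to the scripts, styles, and third-party resources the application actually loads.

```python
import secrets

# Weak: 'unsafe-inline' lets any injected inline <script> run.
WEAK_POLICY = "default-src 'self'; script-src 'self' 'unsafe-inline'"


def build_csp(nonce: str) -> str:
    """Return a policy value that only allows scripts carrying the nonce."""
    directives = [
        "default-src 'self'",
        f"script-src 'nonce-{nonce}'",
        "object-src 'none'",
        "base-uri 'none'",
    ]
    return "; ".join(directives)


# The nonce must be unpredictable and regenerated for every response.
nonce = secrets.token_urlsafe(16)
headers = {"Content-Security-Policy": build_csp(nonce)}
```

Legitimate script tags in the page would then carry the same value in their `nonce` attribute. An injected inline script has no way to know the per-response nonce, so the browser refuses to run it.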

This is where testing of supporting controls becomes useful. Tools available on the Vulnify free tools page, particularly security header analysis, help teams review whether public-facing protections such as CSP are missing or poorly configured. Stronger headers do not replace proper output encoding, but they do strengthen the overall defensive posture.

HttpOnly and Secure Cookies

Cookies marked with the HttpOnly flag cannot be read directly by client-side JavaScript. This can reduce the value of some XSS attacks by making direct cookie theft harder. The Secure flag also helps ensure sensitive cookies are sent only over encrypted connections.

These controls do not prevent XSS from existing, but they can reduce the damage caused by successful exploitation. That is why they should be treated as part of a layered security model rather than a standalone fix.
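As a small illustration, Python's standard `http.cookies` module can produce a session cookie with both flags set. The cookie name `session` and the helper name are assumptions, and most web frameworks expose equivalent settings directly.

```python
from http.cookies import SimpleCookie


def session_cookie_header(session_id: str) -> str:
    """Build the value of a Set-Cookie header for a session cookie."""
    cookie = SimpleCookie()
    cookie["session"] = session_id
    cookie["session"]["httponly"] = True  # not readable from page JavaScript
    cookie["session"]["secure"] = True    # sent only over HTTPS
    cookie["session"]["path"] = "/"
    return cookie["session"].OutputString()


print(session_cookie_header("abc123"))
```

The printed value contains the `HttpOnly` and `Secure` attributes alongside the cookie itself, which is what a header review would look for in the application's responses.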

Framework and Dependency Hygiene

Outdated frontend libraries, plugins, and rendering helpers can introduce DOM-based XSS issues or unsafe behavior even when application code appears disciplined. Teams should maintain dependency hygiene and review security updates regularly. A weakness may come not only from the form-handling code itself, but also from the library used to render the submitted data afterward.

How to Test Forms for XSS Exposure

Effective testing should combine manual review with automated validation. Manual review helps developers understand where input enters the application, how it is transformed, and where it is rendered later. Automated testing helps scale that review across many parameters, pages, and entry points that may be difficult to assess consistently by hand.

When testing forms for XSS exposure, teams should review visible inputs, hidden parameters, search fields, comment systems, profile fields, support forms, client-side rendering flows, and any place where user-controlled values may appear in HTML or the DOM. Special attention should also be paid to administrative interfaces and secondary render paths because these are common places where stored content becomes dangerous.
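One simple triage step during this kind of review is to submit a distinctive probe and check how it comes back in the response. The sketch below is a coarse, hypothetical helper, not how any particular scanner works. It only classifies a response body that has already been fetched, and a "reflected-raw" result is a prompt for manual investigation rather than proof of a vulnerability.

```python
from html import escape

MARKER = "zq7xss"         # arbitrary string unlikely to occur naturally
PROBE = MARKER + "<\"'>"  # marker followed by HTML-significant characters


def classify_reflection(body: str) -> str:
    """Report how the probe appears in a response body."""
    if PROBE in body:
        return "reflected-raw"       # special characters came back intact
    if MARKER in body:
        return "reflected-modified"  # encoded or filtered on the way out
    return "not-reflected"


unsafe_page = f"<p>You searched for: {PROBE}</p>"
safe_page = f"<p>You searched for: {escape(PROBE)}</p>"
print(classify_reflection(unsafe_page))  # reflected-raw
print(classify_reflection(safe_page))    # reflected-modified
```

For stored and secondary render paths, the same check applies to the page where the content is later displayed, such as an admin dashboard, rather than to the immediate form response.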

Security scanners can help identify suspicious reflections, risky response patterns, and exposed application behavior that deserves closer analysis. They do not replace secure coding or code review, but they are useful for finding places where application behavior suggests XSS risk. The Vulnify security blog covers broader security education and related topics.

How Vulnify Helps Identify XSS Risks

Preventing cross-site scripting ultimately depends on secure development practices such as strong validation, safe output encoding, secure templating, and careful frontend rendering. However, security teams still need a practical way to verify whether exposed applications may contain XSS weaknesses. This is where vulnerability scanning platforms such as Vulnify are useful.

Vulnify does not rewrite application code or automatically sanitize form inputs. Instead, it helps organizations identify exposed attack surfaces, detect potentially risky behavior across public-facing application flows, and verify whether remediation changes appear to reduce risk when the application is re-tested.

Example 1: Reflected Input in a Search Form

Suppose a website has a search form that displays the submitted query inside the results page. If the application reflects that value back into the page without correct encoding, an attacker may be able to abuse that behavior for reflected XSS.

In this scenario, security testing can help identify that the parameter is reflected into the response and deserves closer analysis. Vulnify can support this workflow by helping security teams review exposed parameters, investigate reflection behavior, and decide whether the application is safely handling user-controlled input.

Example 2: Stored Input in an Administrative Workflow

Imagine a contact form where submitted content is later displayed in a staff dashboard. If that dashboard renders message content unsafely, a malicious submission could become a stored XSS issue affecting internal users.

This is not something that should be described as “fixed by scanning,” because the real fix is still in the application code. However, testing helps reveal where risky rendering paths may exist. Vulnify supports that investigative process by helping teams examine exposed workflows and identify application behavior that may indicate stored XSS risk.

Example 3: Browser-Side Protection Review

Even when developers implement stronger validation and encoding, browser protections still matter. If Content Security Policy is weak or missing, a successful XSS issue may be easier to exploit. In this case, Vulnify’s public tooling is relevant because security header analysis helps teams review whether browser-side controls such as CSP are present and reasonably configured.

This makes the platform useful not only for identifying possible injection-related exposure, but also for reviewing supporting controls that reduce the impact of successful XSS attacks.

Example 4: Verifying Fixes After Remediation

After developers improve input handling, strengthen encoding, or tighten frontend rendering logic, security teams should verify that the public-facing behavior has improved. Automated re-testing helps confirm whether previously identified risky patterns still appear to be exposed.

This is where repeatable scanning becomes especially valuable. Teams can fix an issue, re-test affected application flows, and compare whether exposure appears reduced. Further guidance on workflows and reporting is available in the Vulnify documentation.

Conclusion

Input sanitization is an important part of XSS prevention, but it is not the complete answer. Secure web forms depend on multiple layers working together, including strict validation, safe output encoding, disciplined frontend rendering, supporting browser-side protections, and ongoing testing. When teams rely on weak filters alone, they often create a false sense of security while leaving real exploit paths open.

Because web forms remain one of the most common entry points for reflected, stored, and DOM-based XSS, they should be treated as a high-priority area for both developers and security teams. By treating all input as untrusted, minimizing unsafe rendering patterns, and reviewing how data moves through the full application lifecycle, organizations can dramatically reduce risk.

Strong coding practices prevent many XSS problems before they ever reach production, but testing remains essential because real applications are complex and secondary render paths are easy to miss. The most effective strategy is to combine secure development with repeatable verification. That is what turns form security from a checklist item into a practical defense model.